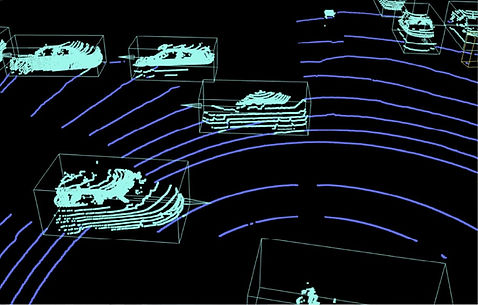

3D LiDAR Point Cloud Annotation Services

Expert 3D sensor fusion data annotation services that enable your computer vision and 3D perception systems to accurately perceive and interact with the world.

Service Options

3D LiDAR point cloud data annotation services

Precision 3D annotation that transforms LiDAR data into clear, accurate, ready-to-train datasets for next-generation perception systems.

3D cuboid annotation

Precise 3D cuboids (3D bounding boxes) that capture object position and dimensions with exceptional accuracy.

Autonomous driving obstacle detection

Logistics sorting

Security systems

Robotic grasping

Highlights

Why choose BasicAI

Trusted provider of training data for Fortune 500 companies and leading AI research teams worldwide.

Proprietary platform

AI-enhanced image data labeling and quality inspection tools that accelerate annotation while improving precision.

Efficient project management and proprietary tools deliver industry-leading results.

10x faster image labeling

7+ years of expertise

99%+ quality assurance

300k+ datasets delivered

Use Cases

LiDAR point cloud annotation for AI in every industry

Volvo Construction Equipment

"Quick communication, seamless adaptation to our evolving data formats, and excellent annotations despite the noisy data lacking corresponding figures. Their professionalism and technical skill made them a valuable partner."

Mohammad Loni

Senior AI Research Engineer, Volvo CE, Sweden

Process

An efficient and seamless project process

01 Validation

Free sample annotations tailored to your machine learning objectives ensure perfect alignment.

02 Onboarding

Clear communication of requirements creates a foundation for exceptional quality in every task.

03 Production

Meticulous annotation with multi-layered verification delivers results that exceed expectations.

04 Refinement

Projects complete only when they meet both your standards and ours, with continuous improvement built in.

Frequently Asked Questions

If you have further questions, please feel free to contact us at sales@basic.ai.